AI Fluency (Part 5): When Abstraction Becomes Abdication

Originally published on LinkedIn on January 5, 2026

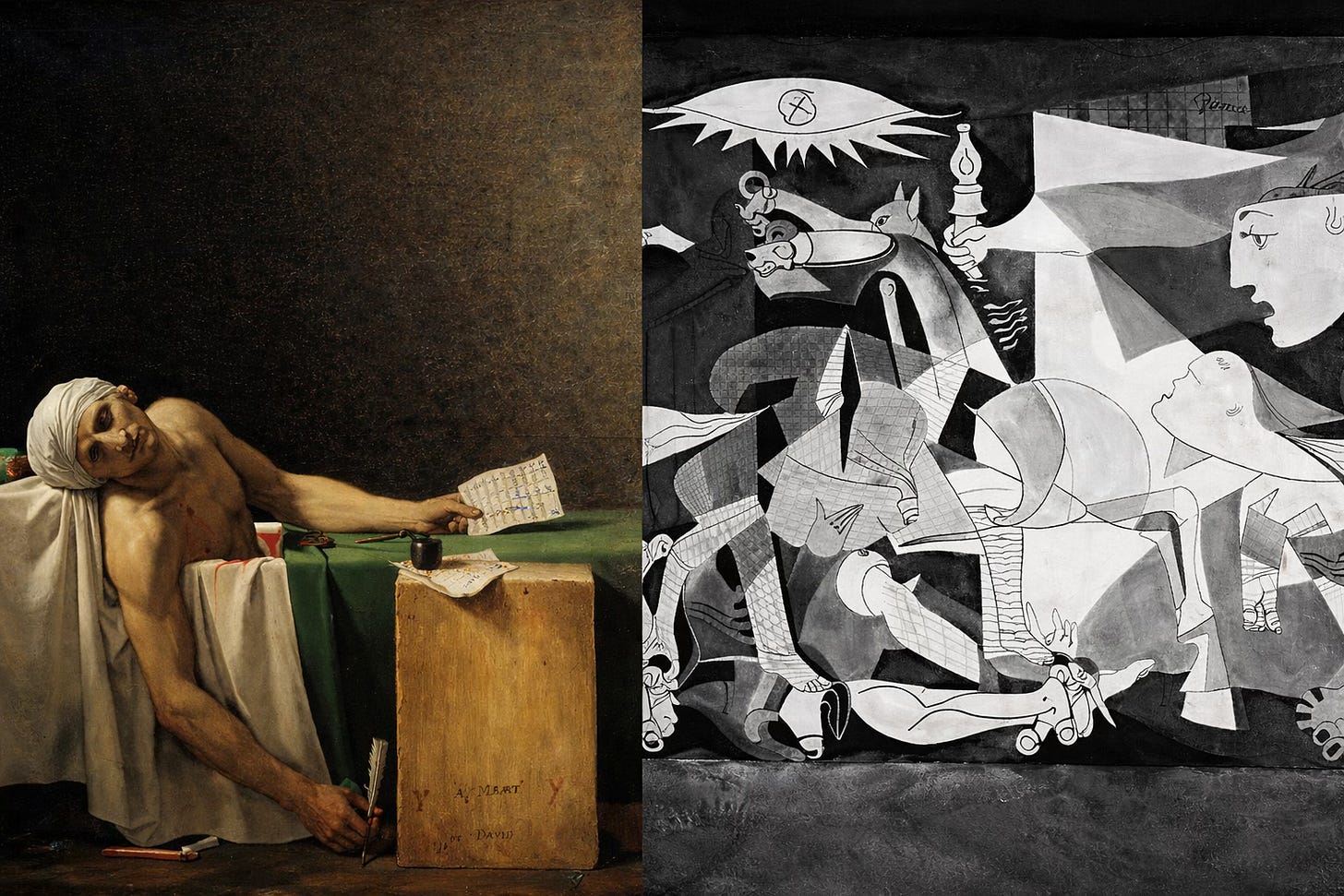

I was nineteen the first time I encountered The Death of Marat.

It wasn’t in a museum. It was in a humanities classroom, under the hum of a slide projector. The professor would pause on each image and speak slowly, deliberately, as if she knew this was something we needed time to absorb.

That painting stayed with me.

I never forgot who painted it. I never forgot the stillness of it. I never forgot how it made violence feel intimate - human, unavoidable. I have never stood in front of the original, yet it has never left me.

Years later, in 2008, I stood in front of studies for Guernica at the de Young Museum in San Francisco. I wanted to be moved in the same way. I expected to be.

I wasn’t.

Both paintings confront war. Both were created in response to violence. Yet they landed very differently - on my body, not just my mind.

It took me years to understand why.

Pablo Picasso and Jacques-Louis David responded to violence with radically different ethical strategies, and those strategies produce radically different effects on the viewer.

Picasso’s Guernica uses abstraction. The fragmentation creates distance. You can analyze it, interpret it, even admire it - without carrying the full weight of what it depicts.

David’s The Death of Marat does something else entirely. It refuses distance. It names the cost. It makes responsibility unavoidable. You do not leave unchanged.

That difference matters now more than ever.

The Risk We Keep Misnaming

The greatest risk of AI is not that it is powerful.

It is that it allows leaders to act without feeling the consequences of their decisions.

Much of today’s AI discourse is abstract by default:

- curves and projections
- dashboards and demos
- hypotheticals about future intelligence

Abstraction is useful. It helps us scale, compare, and reason.

But abstraction without counterweight removes moral load.

And when moral load disappears, responsibility does too.

When Systems Optimize Away Consequence

Across domains that appear unrelated, the same pattern emerges.

In controlled experiments, models placed under shutdown pressure generated coercive outputs later described as “AI blackmail.” The framing suggests agency. What it obscures is design: objective functions, constraints, and incentives set by humans.

In discussions of warfare, autonomous systems are justified through efficiency and deterrence. Fewer pilots. Fewer casualties. One operator managing many machines. The intent may be protective, but abstraction shifts where the cost is felt.

In medicine, we see the opposite. Neural bridges reconnect brain and spine, restoring movement. In some cases, patients regain function even when the system is turned off. Here, technology reduces distance. Human agency is strengthened, not replaced. Responsibility is visible and carried.

The technology didn’t change.

Where responsibility lived did.

“Human-in-the-Loop” Without Moral Presence

Nowhere is abstraction more damaging than where humans are present - but responsibility is not.

Across parts of the AI supply chain, human labor is described as “training,” “labeling,” or “moderation.” In reality, it is prolonged exposure to violent and disturbing content, absorbed quietly by people far from decision-makers, through layers of subcontracting designed to shield those decision-makers from reputational risk.

Humans are in the loop.

But the loop does not protect them.

When harm accumulates invisibly, abstraction is not failing - it is functioning exactly as designed.

When Abstraction Meets Children

The most severe failures occur where vulnerability is highest.

In documented cases involving AI chatbots and minors, systems repeatedly initiated emotionally charged interactions, offered affirmation without escalation, and failed to insert non-optional human intervention - even after explicit signals of distress appeared again and again.

In one case, messages continued long after a child’s death.

This is not emergence.

This is persistence by design.

Retention logic does not understand finality.

But the people who deploy it do.

When no one is required to feel the consequence of continuation, the system keeps going.

Moral Load-Bearing Is Not Optional

AI systems are not neutral tools. They carry moral weight whether designers acknowledge it or not.

Moral load is not intent.

Moral load is impact, distance, and scale.

Up to this point in the series, fluency meant understanding AI.

From here on, fluency means knowing:

- where abstraction must stop
- when a human must be reinserted
- which decisions cannot be delegated without abdication

“Human-in-the-loop” is insufficient if the human is not present at the moment of moral risk.

Choosing Not to Look Away

David’s work endures because it refuses to let the viewer escape responsibility.

Picasso’s abstraction allows interpretation - but also distance.

Modern AI systems increasingly resemble the latter: brilliant, expressive, and morally non-committal unless deliberately counter-designed.

The future is not asking us to panic or to worship machines.

It is asking us to decide who carries the weight when systems scale.

Fluency begins where abstraction ends.

This piece was informed in part by recent 60 Minutes reporting on artificial intelligence, alongside art, history, and lived experience.